2016: Silicon Cognition

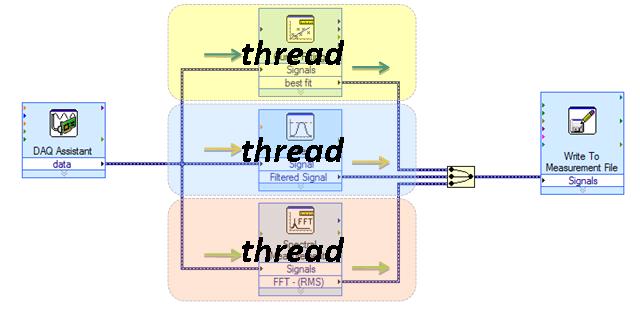

Several years ago I flogged the term “Cloud-Based Episodic Parallel Processing” and tossed c-bepp.org onto the Internet with a concise manifesto of a vision: a cloud API for applications running on PCs and other puny computers to be called when massive computation (or computation on massive amounts of data) is required.

My focus was applications like personal medicine, where a patient’s symptoms and genomic information (for example, the DNA sequence of their tumor) could be correlated against a vast database of similar sufferers, and the best course of therapy for the particular patient selected based on outcomes amassed over time and the spectrum of therapies that had been tried.

I likened the process to a hybrid car, which carries a modest gas engine of barely sufficient power for light-demand usage but also an electric drive to add bursts of power in high-demand situations.

C-BEPP received limited interest at the time— some interesting conversations, some expressions of interest, a few amens— but ultimately it didn’t gel. Wasn’t actionable. The necessary software constructs seemed unapproachable.

But tonight it struck me: in the end, it actually did gel in a sense— with a twist. Driving home, my watch guided me along the roadways, gently tapping me when turns were necessary: tap, tap, tap for right; tap-tap, tap-tap, tap-tap for left. It did this by harnessing a sort of episodic cloud-based processing: Here was the puniest of personal computers, drawing intelligence from a distant cauldron of immense compute power.

The twist is that there is an intermediary I had not foreseen: the smartphone. Powerful computers in their own right by any classical measure, these always-connected, sensor-festooned, multi-networked wonders provide valuable preprocessing and situational information to the distant brain based in the cloud. My phone interacted with me via the watch, and it corresponded with the cloud-brain, serving as interlocutor and conductor of the orchestra of data to and from my wrist.

In return, the cloud-brain gained insight from observing me: knots of traffic I encountered, my speed, diversions and alternate routes I chose to take, and so on. Perhaps even biometric information from my watch caught its interest, since that was available too. Who knows, if I wrenched the wheel sharply, it might even have noted an obstacle in the road.

My eyes were its.

Silicon Ganglions

Throughout history, successful innovations have often paralleled biological systems. Airplanes possess similarities to birds; submarines to fish. Advanced coatings draw heavily from nanostructures on plant-leaves, unwettable by even the heaviest rain. Camera imaging chips draw from the design of the retina, and on and on. It's called biomimetics.

So here we see an emerging compute paradigm similar to the biological structure known as a ganglion: a mini-brain, a peripheral intelligence interposed between transducers in the field and central processors in the cloud.

My phone's role in my evening commute struck me. In 2016, it is the ganglion in a diffused intelligence in which I am a participant, beneficiary, transducer… and sensor.

Silicon Intelligence

Looking back at 2015, perhaps the most remarkable thing I read was a Google Research blog post entitled “Inceptionism: Going Deeper into Neural Networks” (http://googleresearch.blogspot.co.uk/2015/06/inceptionism-going-deeper-into-neural.html). This described recent work in correlative recognition using the specialized compute structure called a neural network. These systems learn by observing large data sets. Their predictive capability self-adjusts over time via observation and feedback (http://www.dspguide.com/ch26/4.htm).

And, when idle, they dream. That Google Research blog post presented otherworldly phantasms imagined by the neural networks when ruminating over image databases.

Haunting stuff, not least because the phantasms seem so brilliantly dream-like. The stuff of a dawning intelligence. The stuff of nightmares.

What recognitions, then, might such an intelligence draw if fed something close to the sum of human thought and behavior? In 2016, I think we will begin to find out, because portals to that exist today. They are specialized in various ways: consider the databases of a Google, an Amazon, an NSA. Immense insights await the hungry brain capable of observing it all.

Silicon Consciousness

There’s more. The difference between intelligence and consciousness is simply being-in-the-now. Observing and correlating databased truth is one thing, and it allows inferences and recognitions to be drawn. But observing and correlating the world as it happens is quite another thing, and this is the natural agenda of the Internet of Things as it unfolds, whether its participants are aware of it or not. This is the stuff of consciousness, not just intelligence.

And, in 2016, it is here.

Silicon Precognition

Philip K. Dick’s 1956 short story, “The Minority Report” (later turned into an engaging movie), centered on mutants capable of foreseeing crime. In 2016 we need no mutants: we have the germ of silicon consciousness animated by silicon intelligence, leavened by a critical mass of human thought and behavior, and illuminated by a real-time sensorial feed from exponentiating millions of sensor-equipped minions like me, just going about our days. Given such a vast and dense sense of history and now present, the leap to foreseeing the future is a minor one.

And an inevitable one.

Consider again who those big-data players are.

Consider again the real value of big data in 2016.

Biomimetics, writ large

Throughout history, successful innovations have often paralleled biological systems. And so the beehive enjoys a collective consciousness, animated and leavened and illuminated by its throngs of worker bees as they go about their day. Occasionally one will find a promising flower-patch and will convey news of the bounty back to the hive by pheromone and even through coded dance.

And so I arrived home, finding my family freshly returned from a wine-tasting expedition in the hills behind us. My day’s meetings had gone well; the Pinot Noir they brought home is truly excellent. The brain in the cloud observes my copacetic pulse-rate, the relaxed sway of my motions, the contemplative tap-tap of my keyboard.

What is my coded dance?

This essay was originally posted on Patricia Seybold's customers.com site.